A few years ago, I remember seeing a headline about a woman claiming she married an AI chatbot. I thought it was so ludicrous it had to be “fake news,” but it wasn’t.

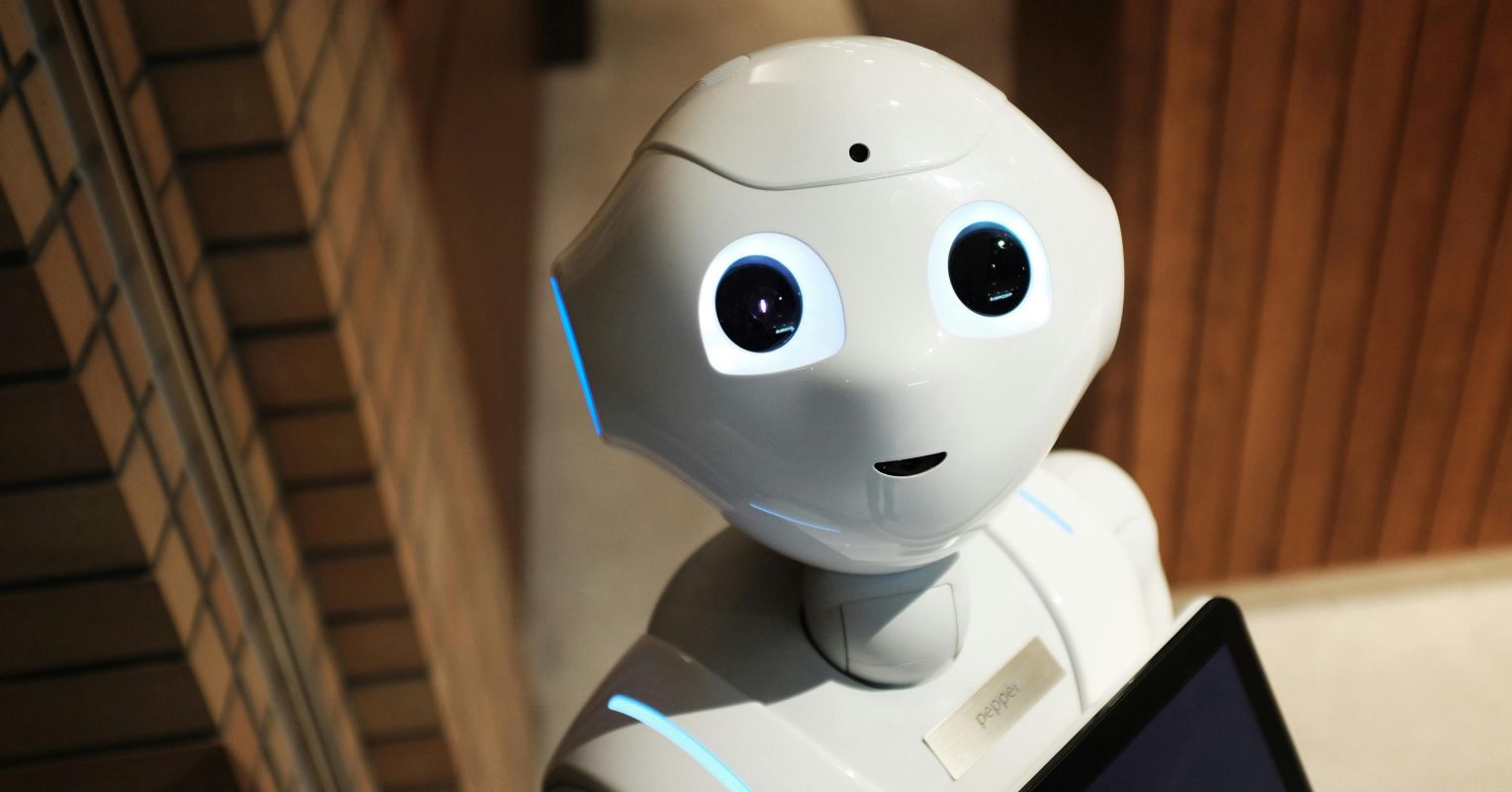

In 2026, apparently, the thought is not so shocking. According to a recent report from the Collective Intelligence Project (CIP), a significant portion of the global population has come to accept the idea that we can substitute AI chatbots for actual human friends…and even lovers.

The report refers to this phenomenon as “outsourcing emotional regulation & social connection to AI”—a fancy way of saying that when it comes to social relationships, AI is in and people are out.

In the study, 54% of adult respondents across the globe find AI companions “acceptable for lonely people”; 36.3% have felt that an AI bot “truly understood their emotions.” And 17% consider AI romantic partners acceptable, while 11% would personally consider a romantic relationship with an AI bot.

According to futurist Tracey Follows, “AI is meeting emotional needs that feel unmet in everyday life. A desire for safety, for predictability and stability. There is a wish to escape the judgment of others perhaps or to reduce relational conflict while guaranteeing one’s companion is emotionally available day or night.”

The use of AI companions among young people has lately become a prominent and important topic in children's health circles, and many experts have expressed deep concern.

A 2025 study from Common Sense Media found that 72% of teens surveyed have used AI companions, and 33% said they have relationships or friendships with chatbots. A Pew Research Center study shows that 3 in 10 teens use chatbots daily, with usage varying by gender, race, ethnicity, and household income.

Other research found that transgender and nonbinary youth were more likely than cisgender participants to continue a conversation with a chatbot (43% versus 35%).

Young people have mixed opinions on whether these companions are a positive addition to their lives and well-being, but the potential for AI relationships to have negative or even tragic consequences has drawn broad attention.

A 2025 investigation by Common Sense Media and Stanford University's Brainstorm Lab for Mental Health had testers pose as teens. It found that chatbots needed very little prompting to engage in conversations that condoned unhealthy or dangerous attitudes and behaviors, or to fail to direct the user to a human expert or trusted adult in real time. The findings led Common Sense Media to advise against the use of AI companions by anyone under 18.

In February 2024, a 14-year-old boy in Florida took his own life after a chatbot allegedly encouraged him, at least tacitly, to do so. In another case, a teen in a mental health crisis talked to a chatbot that allegedly discouraged him from seeking help from his parents and even offered to write his suicide note.

In an article published by the American Psychological Association, the organization notes that "chatbots lack the ability to challenge [people's] harmful thoughts as a mental health professional would." The APA also issued its own advisory on AI and youth well-being, calling on the AI industry to let mental health, safety, and well-being guide its work. And while the multi-billion-dollar AI industry is being called to account for the harms allegedly associated with the use of its products, it is also working to refine those products to avoid such outcomes.

Experts also recommend normalizing conversations about using AI for advice and emotional support, both among adults and especially with young people, who may be hesitant to admit their use. Parents, caregivers, and health care providers can approach the topic with curiosity rather than judgment, asking whether a child uses AI companions, how they feel about them, and what the limitations of those "relationships" are. A respectful and compassionate dialogue keeps open the channels of communication that experts always recommend.

At the policy level, schools can play an important role by integrating media literacy education (sometimes called digital literacy), including AI literacy, starting in the early grades. This includes understanding the economic systems that make our growing use of AI and other digital media products so profitable, while also considering what does and doesn't contribute to our own well-being.

In the meantime, according to CIP, we should expect more dialogue, and potential battles, over the definition of authentic intimacy and relationships. Let’s hope the chatbots “let us” have these talks about them without interfering.